Let a pebble slip from your hand and it falls straight to the ground. Toss the pebble sideways, and it traces a parabolic arc through the air. Imagine throwing the pebble sideways with even more speed. It lands further away. Imagine throwing the pebble with such great velocity that the surface of the Earth begins to curve away beneath it as it falls. In the absence of air friction, a pebble thrown sideways with sufficient velocity will fall in such a way that the Earth curves continuously out from underneath. The pebble falls endlessly without ever touching the ground. It is in orbit.
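To put a rough number on "sufficient velocity", here is a minimal sketch. It assumes a perfectly spherical, airless Earth and uses modern values for surface gravity and the Earth's radius, neither of which appears in this post. The pebble stays in a ground-skimming circular orbit when the acceleration needed to bend its path into a circle, v²/r, equals the acceleration of free fall.

```python
import math

# Assumed modern values (not taken from the post).
G_SURFACE = 9.81   # acceleration of free fall at the surface, m/s^2
R_EARTH = 6.371e6  # mean radius of the Earth, m


def circular_speed(g: float, radius: float) -> float:
    """Speed at which the centripetal acceleration v^2 / radius equals g."""
    return math.sqrt(g * radius)


v = circular_speed(G_SURFACE, R_EARTH)
period_minutes = 2 * math.pi * R_EARTH / v / 60

print(f"ground-skimming orbital speed: {v / 1000:.1f} km/s")
print(f"time for one circuit: {period_minutes:.0f} minutes")
```

Under these assumptions the pebble needs a bit less than 8 km/s, and it comes back around in well under two hours.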

The idea that an orbit is the state of a body in continual free-fall can be traced to the 1600s, and was first stated in print by Robert Hooke, whose paper, entitled “The Inflection of a Direct Motion into a Curve by a Supervening Attractive Principle”, was read to the Royal Society on May 23rd, 1666. Robert Hooke’s fame and reputation have spent the last three hundred and twenty years in Newton’s shadow, but he was a tremendously inventive scientist, and indeed, was one of the founders of what we now consider the scientific method. (See, for example, the recent Hooke biography, “The Forgotten Genius” by Stephen Inwood.) Hooke, drawing on the earlier ideas of William Gilbert and Jeremiah Horrocks, and profiting from conversations with fellow Royal Society member Christopher Wren, realized that if the Sun exerts an attractive force on bodies in space, then “all the phenomena of the planets seem possible to be explained by the common principle of mechanic motions.” Hooke had an intuitive (but non-mathematical) understanding of the orbit in the sense described in the paragraph that opens this post.

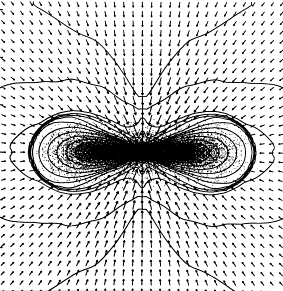

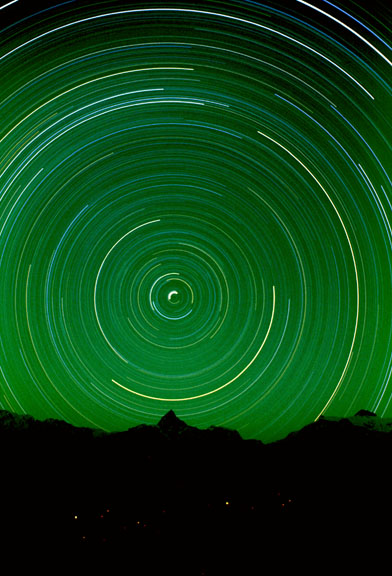

Robert Hooke was shouldered with a bewildering variety of interests and responsibilities. One of his many jobs was to produce weekly demonstrations for the entertainment and edification of the Royal Society. In order to illustrate his concept of the planetary orbit, he devised a demonstration that provided a suggestive analogy. A bob was placed on a long string pendulum. Tension from the string provided a central attractive force, and a sideways push provided the requisite tangential motion. When given a sideways push of exactly the correct speed, the bob would swing in a circle. When started at other speeds, it traced an elliptical path. With this simple device, Hooke was able to illustrate how an orbit is a compound of tangential motion and an attractive radial force.

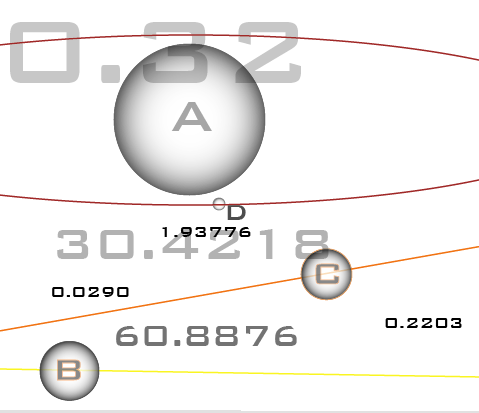

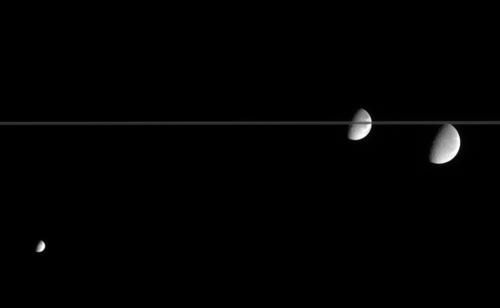

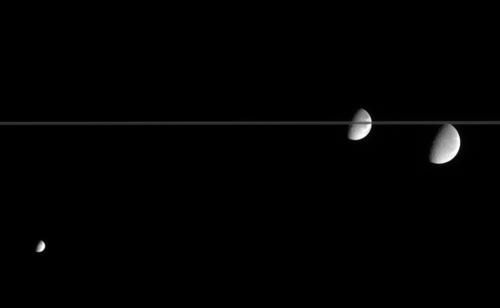

Hooke then made the analogy more elaborate by attaching two bobs to the end of the string. Once set in motion, the two bobs would orbit each other, while their center of mass orbited the center of attraction:

In 1670, Hooke delivered a Cutler lecture at Gresham College, entitled “An Attempt to Prove the Motion of the Earth by Observations”. The written version of this lecture contains three remarkable postulates: (1) a specification of the concept of universal gravitational attraction, that is, that mass attracts mass, (2) the assertion that all bodies “that are put into a direct and simple motion would continue to move in a straight line unless deflected”, and (3) the hypothesis that the attractive gravitational force falls off with distance. Taken together, these ideas are a remarkably correct qualitative formulation of the foundations of gravitational dynamics. Had Hooke been equipped with the mathematical skill to express his three ideas quantitatively, he would have gone very far indeed.

At the same time that Hooke was demonstrating his pendulum analogy to the Royal Society, Isaac Newton was nearing the close of his Anni Mirabiles. By 1666, Newton, who was working in total isolation, had found a quantitative model that explained the circular orbit, and also showed that gravity is manifested by an inverse square law of attraction.

I began to think of gravity extending to the orb of the Moon, & (having found out how to estimate the force with which a globe revolving within a sphere presses the surface of the sphere) from Kepler’s rule of the periodical times of the Planets being in sesquialterate proportion of their distances from the center of their Orbs, I deduced that the forces which keep the Planets in their Orbs must [be] reciprocally as the squarres of their distances from the centers about which they revolve: & thereby compared the force requisite to keep the Moon in her Orb with the force of gravity at the surface of the earth, & found them to answer pretty nearly. (All of my Newton quotes are drawn from Richard Westfall’s “Never at Rest: A Biography of Isaac Newton”.)

Here’s what Newton is saying. Kepler’s Third Law holds that the orbital period, P, of a planet is proportional to the semi-major axis of its orbit to the 3/2 power, that is,

$$P \propto a^{3/2}.$$

For the simplified case of a satellite in a circular orbit, the semi-major axis, a, is just the orbital radius, i.e. a=r. In Newton’s state of understanding in 1666, the “centrifugal” outward force on an orbiting satellite must cancel the inward force exerted by gravitational attraction from the central body. The gravitational attraction is assumed to be spherically symmetric and to fall off with some power of the distance. That is,

$$\frac{v^2}{r} \propto \frac{1}{r^x},$$

where v is the orbital speed and x needs to be determined. The fact that distance is rate multiplied by time implies that

$$2\pi r = vP,$$

and therefore

$$\frac{r}{P^2} \propto \frac{1}{r^x}.$$

This means that

$$P \propto r^{(1+x)/2},$$

and if Kepler’s third law is to be satisfied, then x=2. Newton had realized that Kepler’s third law implies that gravity is an inverse-square force.
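Newton's remark that the two forces "answer pretty nearly" is easy to try for yourself. The sketch below is not a reconstruction of Newton's own arithmetic. It assumes modern round values for the Moon's distance, the length of the sidereal month, the Earth's radius and surface gravity, and it treats the Moon's orbit as a circle. It compares the acceleration needed to hold the Moon on that circle with surface gravity diluted by the inverse square of the distance.

```python
import math

# Assumed modern values; Newton worked with cruder numbers.
G_SURFACE = 9.81        # m/s^2, gravity at the Earth's surface
R_EARTH = 6.371e6       # m, mean radius of the Earth
R_MOON_ORBIT = 3.844e8  # m, mean Earth-Moon distance
P_MOON = 27.32 * 86400  # s, sidereal month


def centripetal_acceleration(radius: float, period: float) -> float:
    """Acceleration v^2 / r for uniform circular motion, with v = 2*pi*r / P."""
    return 4 * math.pi ** 2 * radius / period ** 2


# What the Moon's circular motion requires.
a_moon = centripetal_acceleration(R_MOON_ORBIT, P_MOON)

# What surface gravity becomes if it falls off as 1 / r^2.
a_inverse_square = G_SURFACE * (R_EARTH / R_MOON_ORBIT) ** 2

ratio = a_moon / a_inverse_square
print(f"required by the orbit:     {a_moon:.3e} m/s^2")
print(f"inverse-square prediction: {a_inverse_square:.3e} m/s^2")
print(f"ratio: {ratio:.3f}")
```

With these inputs the two accelerations agree to within a few percent, which is about as well as a circular-orbit, round-number estimate can be expected to do.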

Newton had thus found a workable mathematical model for the circular orbit in 1666, but at that time, he was behind Hooke in terms of his intuitive understanding of the actual physical situation. Newton’s initial conception of the orbit was one of a mechanical equilibrium, in which an innate tendency to recede during circular motion is balanced by a gravitational attraction. In reality, Hooke’s concept of the orbit as the state of continual free-fall, a state of disequilibrium, is the correct notion.

On Nov. 24, 1679, Hooke, in his capacity as the secretary of the Royal Society, wrote a letter to Newton in order to solicit a discussion of orbital dynamics. Hooke was likely quite proud of his theories concerning orbital motion, and he may well have been eager to bring his ideas to Newton’s attention.

Let me know your thoughts of that of compounding the celestiall motions of the planets of a direct motion by the tangent and an attractive motion towards the centrall body.

Hooke had no way of knowing that Newton had already thought carefully about orbits. It is likely that as soon as Newton saw Hooke’s phrase, he immediately saw that it represented an improved qualitative conception of orbital motion. He quickly wrote back to Hooke, and politely declined the offer of an extended dialog. He was, he said, too busy with other studies.

And having thus shook hands with Philosophy, & being also at present taken of with other business, I hope it will not be interpreted out of any unkindness to you or the R. Society that I am backward in engaging my self in these matters…

Nevertheless, the correspondence between the two continued through several more letters. By January 1680, Hooke had managed to guess (on the basis of two incorrect arguments that combine by chance to give a correct formula) that the gravitational force obeys an inverse square law. What he could not prove, however, was that the general path of an orbiting planet is an ellipse (that is, he could not go beyond the special case of the circular orbit). The fact that the planetary orbits have elliptical figures was then known empirically from Kepler’s First Law, which states that planetary orbits are ellipses with the Sun at one focus.

On January 6th, 1680, Hooke took the liberty of informing Newton of the inverse square law, “My supposition is that the Attraction always is in a duplicate proportion to the Distance from the Center Reciprocall…”, and in a letter on the 17th of January, he further urged Newton to find the general mathematical description of a planetary orbit,

I doubt not but that by your excellent method you will easily find out what that Curve must be, and its proprietys, and suggest a physicall Reason of this proportion.

Hooke’s letters of November through January of 1679-80 seem to have greatly annoyed Newton. With his formidable intuition and dismal mathematical skills, Hooke was steadily blundering toward the pedestal where he could claim the renown of explaining the “System of the World”. Without informing Hooke, Newton carried out (in early 1680) a marvelous derivation that showed that the path of a planet is an ellipse, and simultaneously proved Kepler’s Second Law, which states that the radius vector of a planet sweeps out equal areas in equal times, and which is a statement of the principle of the conservation of angular momentum.
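Newton's derivation was geometric, and nothing below reproduces it. Still, Hooke's recipe of a tangential motion compounded with an inverse-square pull toward the center can be tried numerically. This is a minimal sketch under stated assumptions: units with GM = 1 and semi-major axis 1 (so one period is 2π), a velocity-Verlet integrator, and a start at closest approach. The function name and step count are illustrative choices. If the path is an ellipse with the attracting body at a focus, the distance should range between 1−e and 1+e and the orbit should close. If equal areas are swept in equal times, x·vy − y·vx should stay constant.

```python
import math


def integrate_orbit(e: float, steps: int = 20000):
    """Integrate one period of an inverse-square orbit with velocity Verlet.

    Assumed units: GM = 1 and semi-major axis a = 1, so the period is 2*pi.
    Returns (r_min, r_max, h_spread, closure), where h_spread is the largest
    drift in angular momentum per unit mass and closure is the distance
    between the final and initial positions.
    """
    dt = 2 * math.pi / steps
    # Start at closest approach, moving tangentially at the vis-viva speed.
    x, y = 1.0 - e, 0.0
    vx, vy = 0.0, math.sqrt((1 + e) / (1 - e))

    def accel(px: float, py: float):
        r3 = (px * px + py * py) ** 1.5
        return -px / r3, -py / r3

    ax, ay = accel(x, y)
    r_min = r_max = math.hypot(x, y)
    h0 = x * vy - y * vx  # twice the areal velocity
    h_spread = 0.0
    for _ in range(steps):
        x += vx * dt + 0.5 * ax * dt * dt
        y += vy * dt + 0.5 * ay * dt * dt
        ax_new, ay_new = accel(x, y)
        vx += 0.5 * (ax + ax_new) * dt
        vy += 0.5 * (ay + ay_new) * dt
        ax, ay = ax_new, ay_new
        r = math.hypot(x, y)
        r_min, r_max = min(r_min, r), max(r_max, r)
        h_spread = max(h_spread, abs(x * vy - y * vx - h0))
    closure = math.hypot(x - (1.0 - e), y)
    return r_min, r_max, h_spread, closure


r_min, r_max, h_spread, closure = integrate_orbit(0.5)
print(f"r_min = {r_min:.4f}, r_max = {r_max:.4f}")
print(f"largest drift in angular momentum: {h_spread:.2e}")
print(f"gap between start and end points:  {closure:.2e}")
```

A check like this is only suggestive, since matching the extreme distances and closing the loop do not prove that the curve is an ellipse. It does show Hooke's qualitative picture and Kepler's empirical laws fitting together once the force is inverse-square.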

Years later, soon after the appearance of the Principia, when Hooke claimed that Newton had plagiarized his ideas, Newton lashed out at Hooke in a letter to Edmund Halley:

Should a man who thinks himself knowing, & loves to shew it in correcting & instructing others, come to you when you are busy, & notwithstanding your excuse, press discourses upon you & through his own mistakes correct you & multiply discourses & then make use of it, to boast that he taught you all he spake and oblige you to acknoledge it & cry out injury and injustice if you do not, I beleive you would think him a man of a strange unsociable temper.

Robert Hooke, that man of strange unsociable temper, is nevertheless a man after my own heart. Newton is so far out on the curve that I can’t relate to him at all. I have no concept of how his mind operated, but Hooke, Hooke would be a fantastic person to have a beer with. I can appreciate the way he thought by analogy. I greatly admire his demonstrations, even if they aren’t fully rigorously correct.

With Hooke’s approach in mind, let’s look at some elliptical orbits. When an ellipse has zero eccentricity, the two foci come together, and the ellipse is a circle. A planet on a circular orbit travels at constant speed, and its positions at a hundred equally spaced time intervals are equally spaced:

When the eccentricity reaches 0.1, the orbit looks very much like a shifted circle. When the planet is closest to the Sun (the point known as perihelion), it is moving faster. If you look carefully, you can see that the dots are spaced just a bit more sparsely on the right side than on the left. The e=0.1 orbit just below is very similar to the orbit of Mars, which has an eccentricity e=0.0935. In all of the following figures, the e=0 circular orbit is also shown for comparison:

Among the eight major planets in our Solar System, Mercury, with e=0.205, has the most eccentric figure. Mercury’s orbit is almost identical to the orbit in this plot, which has e=0.20:

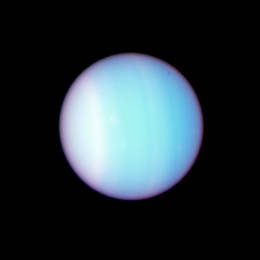

Planets “c” and “d” of the Upsilon Andromedae system have eccentricities of e=0.27 and e=0.28 respectively. Their orbital figures are quite close to this orbit, which has e=0.3:

70 Vir b was one of the first extrasolar planets to be discovered. It has a very well defined orbit with e=0.4:

When e=0.5, it’s impossible to mistake the ellipse for an off-center circle. The extrasolar planet GJ 3021 b has an eccentricity e=0.511, which is close to e=0.5:

At an eccentricity e=0.6, the brevity of the periastron swing is highly pronounced:

When e=0.7, the planet is 5.667 times closer to the star at periastron than at apastron.
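For anyone who wants to make dot plots of this kind, here is a minimal sketch of one way to place a planet at equally spaced times. It is not necessarily how the figures in this post were made. The function names are illustrative, and the semi-major axis is set to 1 with the star at the origin. Equal steps in time are equal steps in the mean anomaly M, and Kepler's equation, M = E − e sin E, is solved for the eccentric anomaly E by Newton's method.

```python
import math


def eccentric_anomaly(mean_anomaly: float, e: float, tol: float = 1e-12) -> float:
    """Solve Kepler's equation M = E - e*sin(E) for E with Newton's method.

    Starting from E = pi keeps the iteration on the side of the root from
    which Newton's method converges monotonically, for any 0 <= e < 1 and
    0 <= M < 2*pi.
    """
    E = math.pi
    for _ in range(100):
        step = (E - e * math.sin(E) - mean_anomaly) / (1 - e * math.cos(E))
        E -= step
        if abs(step) < tol:
            break
    return E


def orbit_points(e: float, n: int = 100, a: float = 1.0):
    """Positions at n equally spaced times, with the star at one focus (the origin)."""
    points = []
    for k in range(n):
        M = 2 * math.pi * k / n
        E = eccentric_anomaly(M, e)
        x = a * (math.cos(E) - e)
        y = a * math.sqrt(1 - e * e) * math.sin(E)
        points.append((x, y))
    return points


def apastron_to_periastron_ratio(e: float) -> float:
    """Ratio of farthest to closest distance, a(1+e) / a(1-e)."""
    return (1 + e) / (1 - e)


points = orbit_points(0.7)
radii = [math.hypot(x, y) for x, y in points]
print(f"closest approach:  {min(radii):.3f}")
print(f"farthest distance: {max(radii):.3f}")
print(f"ratio: {apastron_to_periastron_ratio(0.7):.3f}")
```

The ratio (1+e)/(1−e) reproduces the factor quoted above for e=0.7. The first pair of points, at periastron, is much farther apart than the pair at apastron, which is why the dots thin out where the planet moves fastest.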

The extrasolar planet with the highest known eccentricity, HD 80606 b, has a 111-day orbital period and e=0.938. See my article on this strange world posted last month. Because it dives in so close to its parent star, HD 80606 b is an interesting transit candidate, but a transitsearch.org campaign carried out last year did not detect transits. We’ll try again this year during the upcoming transit opportunities.

Do you give talks on extrasolar planets? High-resolution .pdf files of the above orbital plots are free here for the taking: e=0.0, e=0.10, e=0.20, e=0.30, e=0.40, e=0.50, e=0.60, e=0.70, e=0.80, e=0.90.
