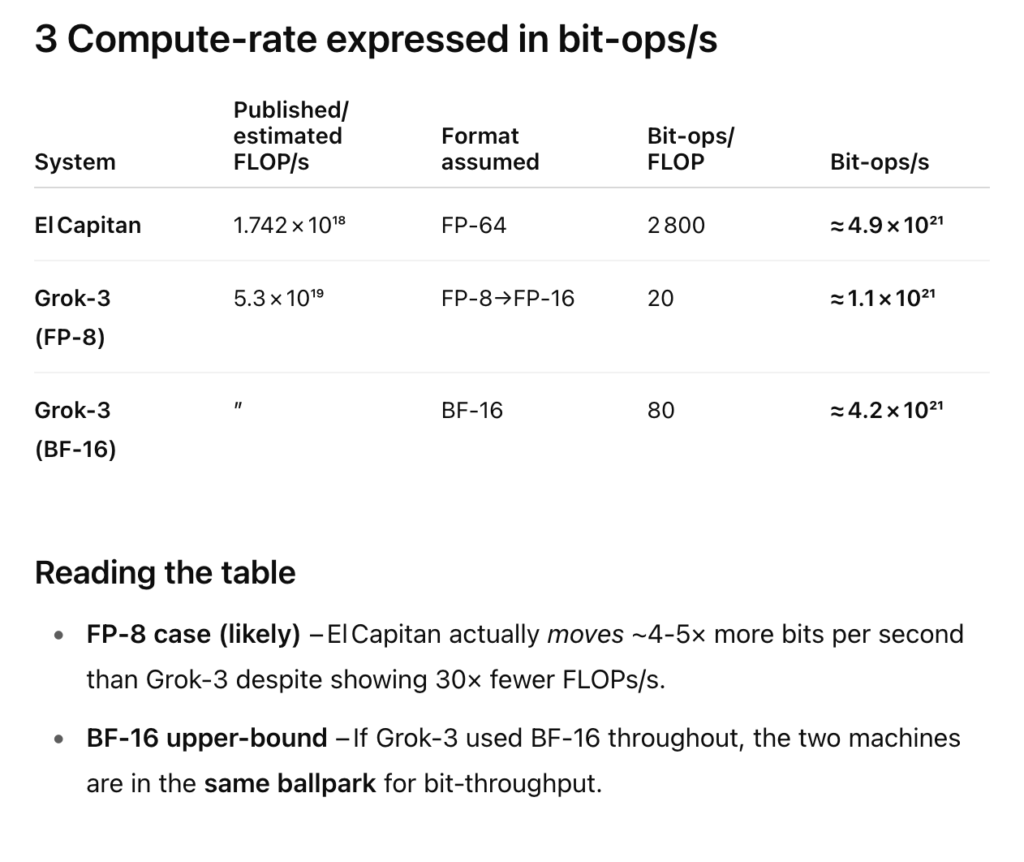

Sadly(?) the Top500 list just ain’t what it used to be. The semi-annual June 2025 update came out recently, and the HPE Cray EX255a, AMD 4th Gen EPYC 24C 1.8GHz, AMD Instinct MI300A, Slingshot-11, aka ‘El Capitan’ pushing out 1.742e+18 flop/s is effectively the Old Town Road of the creaky charts.

“The 65th edition of the TOP500 showed that the El Capitan system retains the No. 1 position. With El Capitan, Frontier, and Aurora, there are now 3 Exascale systems leading the TOP500. All three are installed at Department of Energy (DOE) laboratories in the United States.”

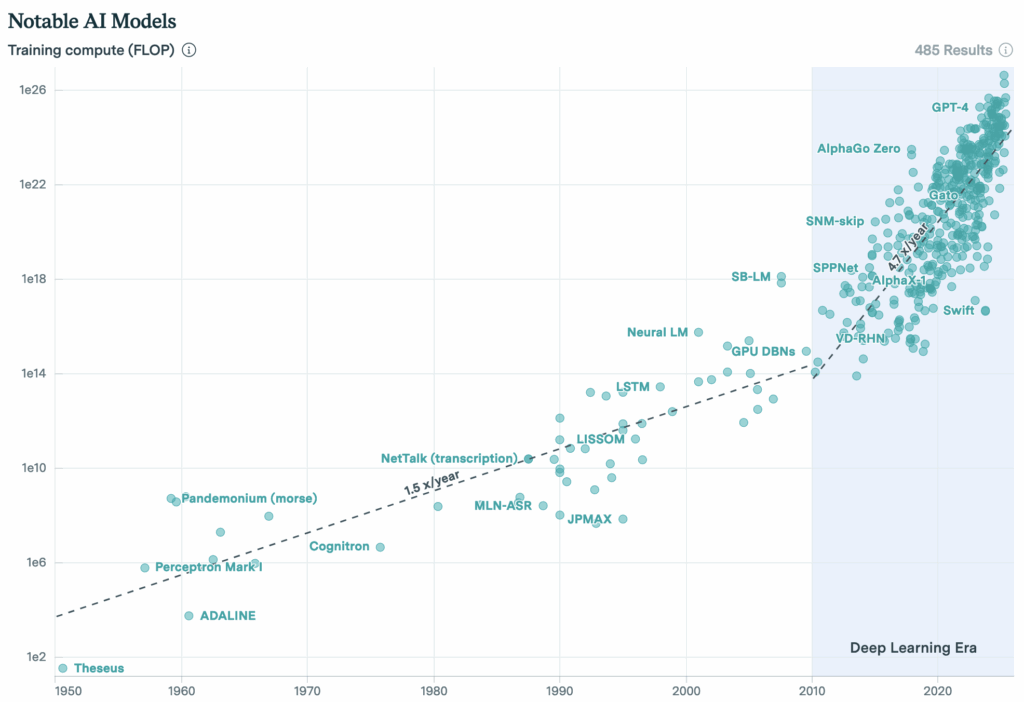

Meanwhile, look at the AI compute chart at epoch.org. The flop count is currently increasing by 5X per year, and the talk on semianalysis is all about nuclear-powered Gigawatt data centers. A pre-training run for a model like GPT 4.5 uses on the order of 2e+26 flops over ~100 days. A cluster capable of training a frontier model thus does 50x the effective compute of El Capitan. Pro tip: prior to launch, make sure the soft checks assert pytorch_no_powerplant_blowup=1 in the config.
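For anyone who wants to poke at that comparison, here is a rough sketch of the arithmetic. The run size, run length, and El Capitan rate are the figures quoted above. The utilization value is my own placeholder assumption, not a number from this post, and the comparison glosses over the fact that the Top500 figure is a 64-bit benchmark while training runs use much lower precision. Dividing total flops by run time gives the sustained rate; how that maps onto a multiple of El Capitan depends heavily on what fraction of peak you assume the cluster sustains.

```python
# Figures quoted in the post
TRAINING_FLOP = 2e26          # total flops for a GPT-4.5-class pre-training run
RUN_DAYS = 100                # approximate length of the run
EL_CAPITAN_FLOPS = 1.742e18   # flop/s, June 2025 Top500 entry

# ASSUMPTION (hypothetical, not from the post): fraction of the cluster's
# peak throughput that the training run actually sustains
ASSUMED_UTILIZATION = 0.3

SECONDS_PER_DAY = 86_400


def sustained_rate(total_flop: float, days: float) -> float:
    """Average flop/s needed to finish total_flop in the given number of days."""
    return total_flop / (days * SECONDS_PER_DAY)


def ratio_to_el_capitan(rate: float, utilization: float = 1.0) -> float:
    """Implied peak rate (sustained rate / utilization) relative to El Capitan."""
    return rate / utilization / EL_CAPITAN_FLOPS


rate = sustained_rate(TRAINING_FLOP, RUN_DAYS)
ratio_sustained = ratio_to_el_capitan(rate)
ratio_peak = ratio_to_el_capitan(rate, ASSUMED_UTILIZATION)

print(f"sustained rate: {rate:.2e} flop/s")
print(f"ratio using the sustained rate: {ratio_sustained:.0f}x")
print(f"ratio using implied peak at assumed utilization: {ratio_peak:.0f}x")
```

The answer moves by a factor of a few depending on the utilization knob, so treat any single multiplier as ballpark only.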

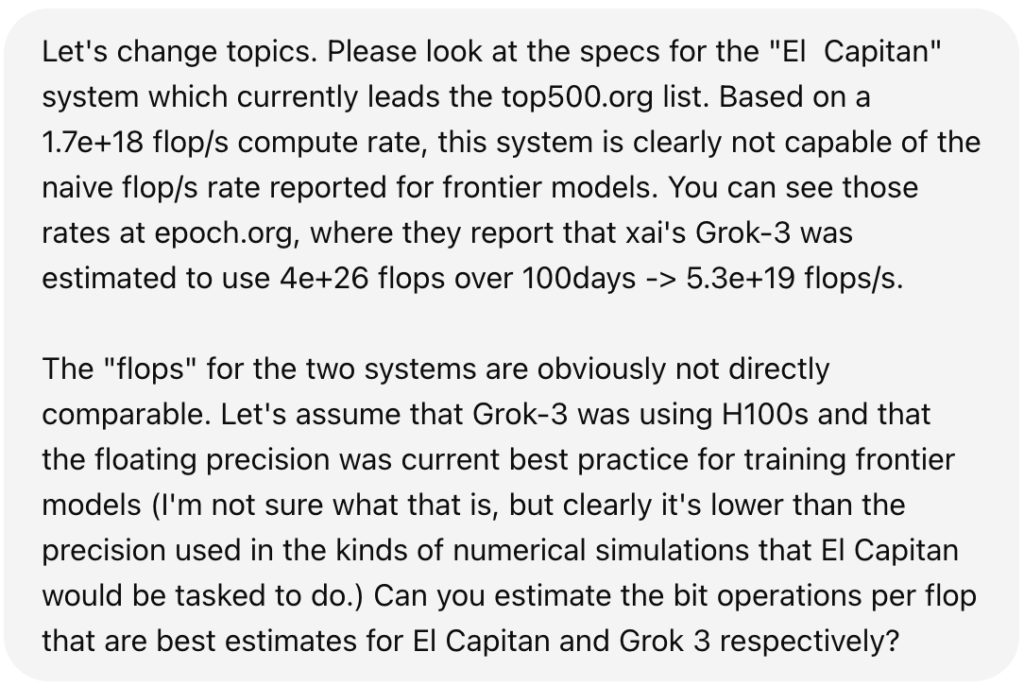

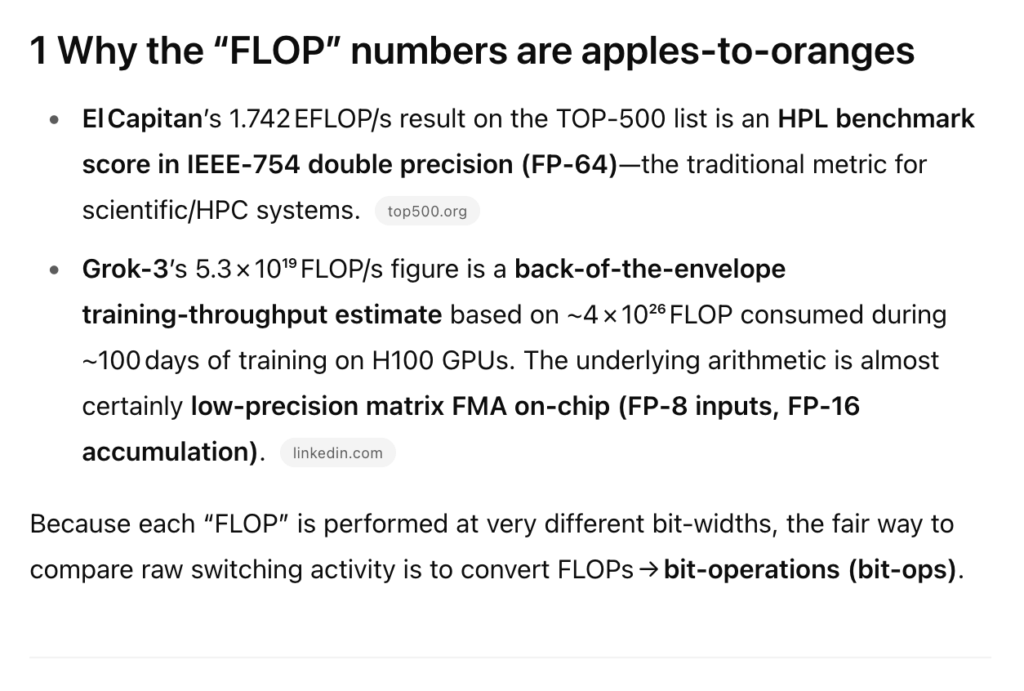

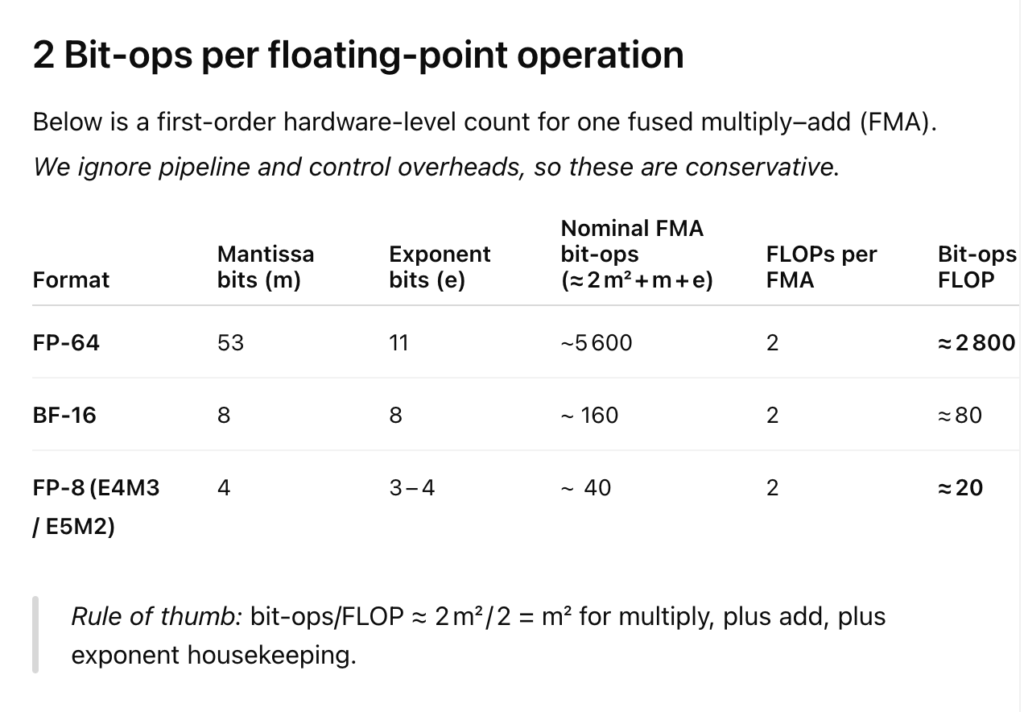

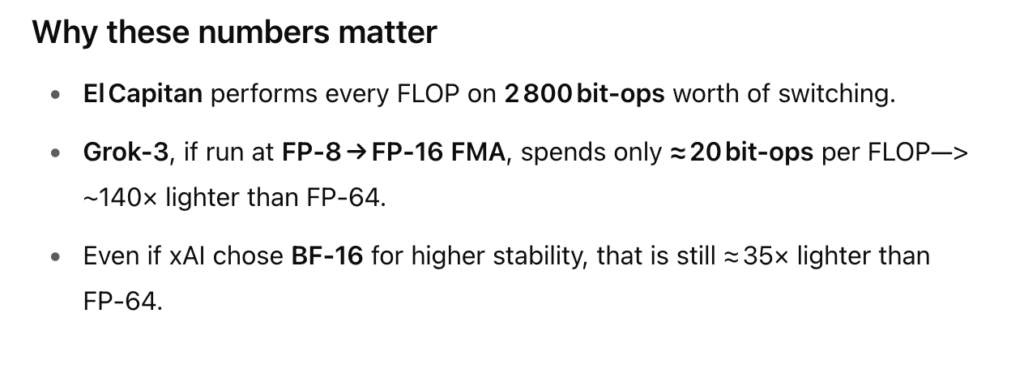

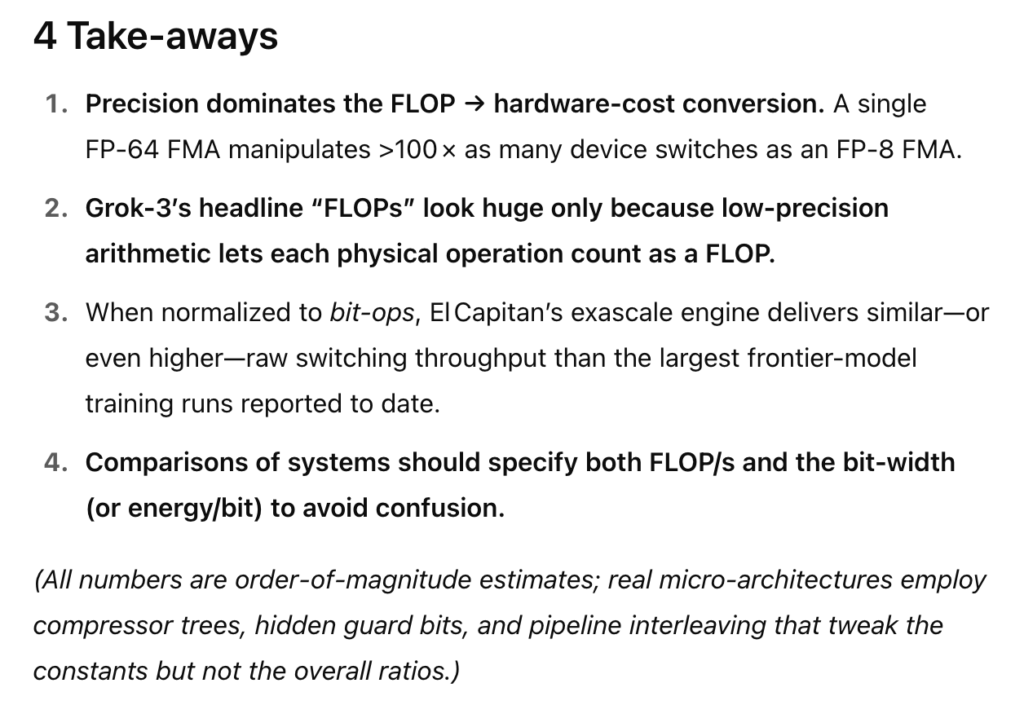

At this point, readers are likely howling with indignation. He’s going apples-to-apples with flops between two radically different computational paradigms! Johnny von Neumann rolls in his early grave.

Luckily, it’s open mic night at oklo.org. Let’s introduce o3-pro and let him get up on stage for a while:

Now I know this is a slippery slope. No oklo.org posts for over a month, and I’m just letting some rando AI hold forth at length about bit operations (which itself consumed about 10^17 bit operations).

I started writing posts about bit operations and large-scale computation a little over a decade ago. As part of those efforts, I tried to introduce a unit of computation. The effort gained zero traction in any community, but I’ll try again:

1 oklo = 1 bit operation per gram of system mass per second

I also asserted that things start to get interesting on a planet when the planet surpasses 1 oklo. Quoting this 2014 post:

Our planet has been heavily devoted to computation, not just for the past few years, but for the past few billion years. Earth’s biosphere, when considered as a whole, constitutes a global, self-contained infrastructure for copying the digital information encoded in strands of DNA. Every time a cell divides, roughly a billion base pairs are copied, with each molecular transcription entailing the equivalent of ~10 bit operations. Using the rule of thumb that the mass of a cell is a nanogram, and an estimate that the Earth’s yearly wet biomass production is 10^18 grams, this implies a biological computation of 3×10^29 bit operations per second. Earth, then, runs at 50 oklo.
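The chain of multiplications in that paragraph can be sketched in a few lines. The inputs are the ones quoted above, plus an Earth mass of 6e+27 grams. The one step I am reading into the text is that each gram of new wet biomass corresponds to freshly divided nanogram cells; the variable names are mine.

```python
SECONDS_PER_YEAR = 3.156e7

# Inputs quoted in the 2014 passage
BASE_PAIRS_PER_DIVISION = 1e9   # base pairs copied per cell division
BIT_OPS_PER_BASE_PAIR = 10      # bit operations per base pair copied
CELL_MASS_G = 1e-9              # rule of thumb: one cell weighs a nanogram
BIOMASS_G_PER_YEAR = 1e18       # yearly wet biomass production
EARTH_MASS_G = 6e27             # mass of the Earth in grams

# ASSUMPTION: every gram of new biomass comes from newly divided cells
cells_per_year = BIOMASS_G_PER_YEAR / CELL_MASS_G
bit_ops_per_division = BASE_PAIRS_PER_DIVISION * BIT_OPS_PER_BASE_PAIR

biosphere_bit_ops_per_s = cells_per_year * bit_ops_per_division / SECONDS_PER_YEAR

# 1 oklo = 1 bit operation per gram of system mass per second
biosphere_oklo = biosphere_bit_ops_per_s / EARTH_MASS_G

print(f"biosphere: {biosphere_bit_ops_per_s:.1e} bit ops/s")
print(f"biosphere: {biosphere_oklo:.0f} oklo")
```

The result lands a little above 3×10^29 bit operations per second and a little above 50 oklo, which rounds to the figures in the quote.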

How are the Magnificent Seven et al. doing when judged on the oklo metric? I would ballpark the current global burden of artificial computation at the equivalent of 30 million H100s, each running at 2e+15 flops in BF16, which works out to about 100 bit operations per flop. That multiplies out to 6e+24 bit operations (10 moles) per second, and includes all the phones, all the CPUs, all the GPUs, everything. The mass of the Earth is 6e+27 grams, so artificial computation at this moment of update is running at 0.001 oklo, and is still 50,000X less important than the biosphere. This is consistent with the observation that on Google Earth, it’s still easy to find boreal forest, and it’s still hard to find data centers.
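Here is the same ballpark as a short script, in case anyone wants to swap in their own guesses. Every input is one of the round numbers from the paragraph above, with the biosphere taken at 50 oklo; the variable names are mine, and Avogadro's number is there only to check the "10 moles" aside.

```python
# Round numbers from the paragraph above
H100_EQUIVALENTS = 3e7     # global compute, in H100 equivalents
FLOPS_PER_H100 = 2e15      # flop/s per H100 at BF16
BIT_OPS_PER_FLOP = 100     # ballpark conversion from flops to bit operations
EARTH_MASS_G = 6e27        # mass of the Earth in grams
BIOSPHERE_OKLO = 50        # biosphere estimate quoted from the 2014 post

AVOGADRO = 6.022e23        # operations per mole

artificial_bit_ops_per_s = H100_EQUIVALENTS * FLOPS_PER_H100 * BIT_OPS_PER_FLOP
moles_per_s = artificial_bit_ops_per_s / AVOGADRO

# 1 oklo = 1 bit operation per gram of system mass per second
artificial_oklo = artificial_bit_ops_per_s / EARTH_MASS_G
biosphere_lead = BIOSPHERE_OKLO / artificial_oklo

print(f"artificial: {artificial_bit_ops_per_s:.0e} bit ops/s ({moles_per_s:.0f} moles/s)")
print(f"artificial: {artificial_oklo:.3f} oklo")
print(f"biosphere lead: {biosphere_lead:,.0f}x")
```

Because the oklo divides by the mass of the whole planet, the result scales linearly with the H100 count, so closing a 50,000X gap takes a lot of data centers.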

But likely not for long.